Fluency from Fragments

1. Introduction

Georgian remains one of the most structurally disadvantaged languages in modern large language model development. Its Mkhedruli script occupies the three-byte range of UTF-8 (U+10D0–U+10FF), so each Georgian letter costs three bytes where an ASCII English letter costs one, and raw Georgian text consumes roughly three times the byte budget of equivalent English text before any model processing occurs. The BPE tokenizers of major LLMs were trained on corpora in which Georgian constitutes a negligible fraction, so individual Georgian words are fragmented into five to eight tokens on average, compared to one or two for English. The practical consequence is that a Georgian prompt consumes roughly four to six times more tokens than an English prompt conveying the same content, shrinking the effective context window proportionally and inflating both training and inference costs.
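The byte-level part of this claim is easy to check directly. The following minimal sketch compares the UTF-8 cost per character of one Georgian word and one English word; the two sample words are our own illustrative choice, not drawn from any corpus.

```python
# Compare the UTF-8 cost per character of Georgian and English text.
# The sample words are illustrative only.
georgian = "საქართველო"  # "Georgia" written in Mkhedruli, 10 letters
english = "Georgia"

def utf8_bytes_per_char(text: str) -> float:
    """Average number of UTF-8 bytes needed per character of text."""
    return len(text.encode("utf-8")) / len(text)

# Every Mkhedruli letter lies in U+10D0..U+10FF, which UTF-8 encodes in 3 bytes.
assert all(0x10D0 <= ord(ch) <= 0x10FF for ch in georgian)

print(utf8_bytes_per_char(georgian))  # 3.0
print(utf8_bytes_per_char(english))   # 1.0
```

This only measures raw encoding cost; the token-level fragmentation described above depends on the tokenizer and is not reproduced here.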

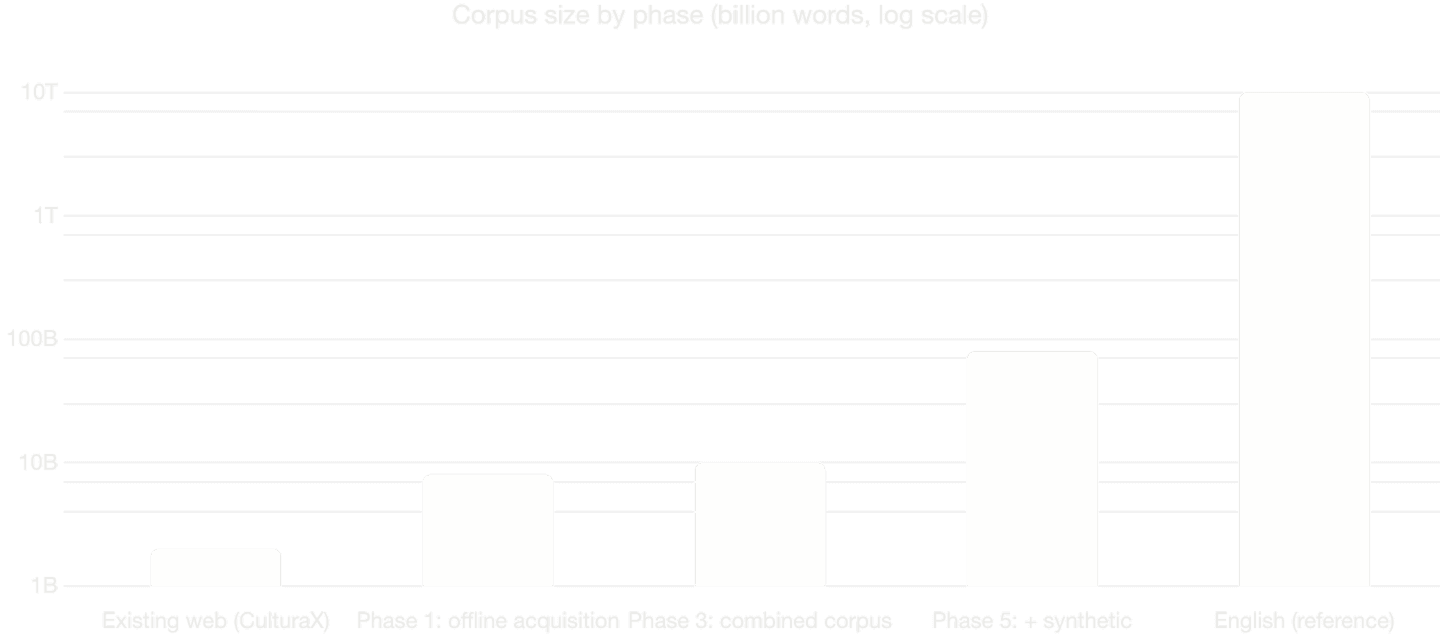

Compounding the tokenization problem is data scarcity. Georgian is spoken by approximately 3.7 million people. The CulturaX dataset, which represents near-total coverage of publicly available Georgian web text, contains approximately two billion words — roughly 0.02% of the English training volume available to frontier models. We have observed that models such as Gemini, which currently perform best on Georgian, appear to have reached a performance ceiling, likely because all gatherable web data has already been consumed.

A third structural issue is translation asymmetry. Current LLMs translate competently from Georgian to English but perform poorly in the reverse direction, a direct consequence of having seen enough Georgian to extract meaning but not enough to internalize its rich morphological and syntactic structure for generation.

This paper presents our plan for addressing all three dimensions — tokenizer inefficiency, data scarcity, and generation quality — through a six-phase pipeline built on Qwen 3.5-2B-Base as the foundation model.

2. Choice of Base Model

We select Qwen 3.5-2B-Base (Qwen Team, 2026) as our starting architecture. Key specifications drawn from the model card:

Parameters: 2B

Hidden dimension: 2048

Layers: 24, arranged as 6 × (3 × (Gated DeltaNet → FFN) → 1 × (Gated Attention → FFN))

Vocabulary: 248,320 tokens (padded), with tied input/output embeddings

Context length: 262,144 tokens natively, extensible to over one million

License: Apache 2.0

Several properties make this model well suited to our task. First, the 2B parameter scale allows rapid iteration on a small number of GPUs while remaining large enough to exhibit meaningful language modeling capabilities. Second, the tied embedding design means that tokenizer modifications affect a single matrix rather than separate input and output projections, simplifying the vocabulary adaptation pipeline. Third, the 248K vocabulary is large, substantially larger than Llama 3's 128K, so it already offers broader multilingual coverage, although Georgian remains severely underrepresented in it. Fourth, the hybrid Gated DeltaNet and Gated Attention architecture offers efficient long-context processing, which directly benefits our use case given that Georgian text occupies disproportionate sequence length under existing tokenizers.

A critical consideration for small models is the ratio of embedding parameters to total parameters. At 248,320 vocabulary entries with a 2,048-dimensional embedding, the embedding matrix alone accounts for approximately 509 million parameters — roughly a quarter of the model. This makes vocabulary pruning especially impactful: removing unnecessary tokens directly reduces the model's memory footprint and frees capacity for Georgian-specific tokens.
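The arithmetic behind this estimate can be reproduced in a few lines. The vocabulary size and hidden dimension come from the specifications above; the nominal total of two billion parameters is an assumption standing in for the exact count, which we do not state here.

```python
# Size of the tied embedding matrix relative to the whole model.
vocab_size = 248_320   # padded vocabulary, from the model card figures above
hidden_dim = 2_048     # hidden dimension, from the model card figures above

embedding_params = vocab_size * hidden_dim
print(f"{embedding_params:,}")  # 508,559,360

# Assumption: a nominal "2B" total; the exact parameter count may differ.
nominal_total_params = 2_000_000_000
embedding_share = embedding_params / nominal_total_params
print(f"{embedding_share:.1%}")  # 25.4%
```

Because the embeddings are tied, this matrix is counted once; with untied embeddings the same vocabulary would cost twice as much.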

3. Phase 1 — Data Collection and Processing

The foundation of this project is a large-scale data acquisition effort targeting Georgian text that exists outside the publicly crawled web. Over the course of several months, we have identified and begun acquiring more than 300,000 non-copyrighted works spanning books, newspapers, academic journals, short stories, and poetry.

Approximately 60% of these documents exist only as scanned images or PDFs without embedded text layers, requiring optical character recognition. We use Google Vision OCR for this task, which handles Georgian script with high accuracy due to the regularity and distinctiveness of the Mkhedruli alphabet. The remaining 40% have been exported, chunked into training-appropriate segments, and cleaned of formatting artifacts.

In addition, several Georgian companies have contributed internal documentation, correspondence, and domain-specific text, adding both volume and genre diversity covering technical, business, and administrative registers.

We project the newly acquired corpus at approximately eight billion words. Combined with the roughly two billion words from CulturaX, this yields a working corpus of approximately ten billion Georgian words, a five-fold increase over what any existing model has likely been trained on.

4. Phase 2 — Tokenizer Adaptation

This phase is technically the most critical. We perform two operations on the Qwen 3.5 tokenizer: vocabulary pruning followed by vocabulary extension with Georgian tokens.

4.1 Vocabulary Pruning

The Qwen 3.5 vocabulary contains 248,320 tokens. A large fraction encode Chinese, Japanese, Korean, Arabic, Hindi, and other scripts irrelevant to a Georgian-English bilingual system. We employ leaf-based frequency pruning as described by Purason et al. (2025), which iteratively removes only tokens that are leaves in the BPE merge graph, that is, tokens that do not participate as components in any other merge operation. This preserves the structural integrity of the tokenizer and avoids creating unreachable tokens, a known failure mode of naive frequency-based or last-N pruning. Purason et al. (2025) report that up to 62.5% of tokens can be removed this way without measurable degradation in downstream performance on retained languages.

We construct the pruning frequency table from a 50–50 mix of Georgian and English text to ensure that tokens needed for both languages are preserved.
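The idea of leaf-based pruning can be illustrated on a toy merge list. The sketch below is a simplified, character-level rendering of the principle, not the implementation of Purason et al. (2025); the function name `leaf_prune`, the toy merges, and the frequency counts are all hypothetical. Here `freq` plays the role of the frequency table built from the Georgian-English mix.

```python
def leaf_prune(merges, freq, n_remove):
    """Remove up to n_remove merged tokens, always choosing the least
    frequent token that is currently a leaf of the merge graph.

    merges: list of (left, right) pairs in merge order; each pair
            produces the token left + right.
    freq:   mapping from token string to its count in the pruning corpus.
    """
    merges = list(merges)
    for _ in range(n_remove):
        # Tokens still used as a component of some remaining merge.
        used = {part for pair in merges for part in pair}
        # A leaf is a merged token that no remaining merge builds upon.
        leaves = [p for p in merges if p[0] + p[1] not in used]
        if not leaves:
            break
        victim = min(leaves, key=lambda p: freq.get(p[0] + p[1], 0))
        merges.remove(victim)
    return merges

# Toy data (hypothetical): "xy" never occurs, "ing" is rare.
merges = [("t", "h"), ("th", "e"), ("i", "n"), ("in", "g"), ("x", "y")]
freq = {"th": 50, "the": 40, "in": 30, "ing": 5, "xy": 0}

print(leaf_prune(merges, freq, 2))
# [('t', 'h'), ('th', 'e'), ('i', 'n')]
```

Note that "th" can never be removed while "the" still depends on it, whereas "in" becomes a leaf only after "ing" has been pruned. This ordering is what keeps every surviving token reachable.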

4.2 Vocabulary Extension

After pruning, we extend the vocabulary with Georgian tokens targeting approximately 40% Georgian composition. Rather than the naive approach of training a separate Georgian tokenizer and appending non-overlapping tokens — which Purason et al. (2025) demonstrated produces 4–10% unreachable tokens across 70 languages — we use continued BPE training. This method resumes the BPE merge learning process directly on Georgian text, starting from the existing tokenizer's merge state. Every new merge operation is guaranteed compatible with the existing merge sequence, producing zero unreachable tokens across all tested languages.
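A toy version of continued BPE training makes the compatibility argument concrete. The sketch below works on characters rather than bytes and uses a hypothetical three-word corpus; `apply_merge` and `continue_bpe` are illustrative names, and a production tokenizer would differ in pre-tokenization and tie-breaking. The point it shows is that new merges are learned on text already segmented by the existing merges and are appended after them.

```python
from collections import Counter

def apply_merge(symbols, pair):
    """Merge every adjacent occurrence of pair within a tuple of symbols."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)

def continue_bpe(word_counts, existing_merges, n_new):
    """Resume BPE merge learning from an existing merge list."""
    # Segment the corpus with the existing merges, in their original order.
    corpus = Counter()
    for word, count in word_counts.items():
        symbols = tuple(word)
        for pair in existing_merges:
            symbols = apply_merge(symbols, pair)
        corpus[symbols] += count
    merges = list(existing_merges)
    for _ in range(n_new):
        # Count adjacent symbol pairs, weighted by word frequency.
        pairs = Counter()
        for symbols, count in corpus.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += count
        if not pairs:
            break
        best = max(pairs, key=pairs.get)
        merges.append(best)
        updated = Counter()
        for symbols, count in corpus.items():
            updated[apply_merge(symbols, best)] += count
        corpus = updated
    return merges

# Hypothetical inputs: one pre-existing merge and a tiny Georgian word list.
existing = [("t", "h")]
words = Counter({"და": 12, "არის": 6, "არა": 4})
print(continue_bpe(words, existing, 2))
# [('t', 'h'), ('დ', 'ა'), ('ა', 'რ')]
```

Because the original merges are kept as an unchanged prefix, every token produced by a new merge is built from symbols the tokenizer can already reach.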

4.3 Expected Compression Gains

With the default Qwen tokenizer, Georgian text achieves a compression ratio of approximately 2.6 bytes per token. After pruning and extending with approximately 16,000 Georgian tokens via continued BPE training, we project compression improving to approximately 4.4–4.5 bytes per token, a roughly 69% improvement in bytes per token, which corresponds to about 41% fewer tokens for the same text. This translates directly to shorter sequences, faster training, faster inference, and a larger effective context window.
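It helps to keep two related numbers apart: the gain in bytes per token and the resulting reduction in token count. The short calculation below derives both from the compression figures quoted above; nothing else is assumed.

```python
# Relate a bytes-per-token gain to the reduction in token count.
before = 2.6                        # bytes per token, default tokenizer
after_low, after_high = 4.4, 4.5    # projected range after adaptation

for after in (after_low, after_high):
    compression_gain = after / before - 1   # more bytes carried per token
    token_reduction = 1 - before / after    # fewer tokens for the same text
    print(f"{after}: +{compression_gain:.1%} bytes/token, "
          f"-{token_reduction:.1%} tokens")
# 4.4: +69.2% bytes/token, -40.9% tokens
# 4.5: +73.1% bytes/token, -42.2% tokens
```

A 69% compression gain therefore shortens Georgian sequences by roughly two fifths rather than by 69%.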

4.4 Embedding Initialization

After extending the vocabulary, new token embeddings are initialized using Fast Vocabulary Transfer (FVT; Gee et al., 2022), which copies embeddings directly for tokens that existed in the original vocabulary and initializes new tokens by averaging the embeddings of their constituent sub-tokens under the original tokenizer. This provides a warm start that substantially reduces the continued pre-training budget needed to bring new tokens to parity.
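The initialization rule can be sketched in a few lines of plain Python. This is a minimal illustration of the copy-or-average scheme described above, not the reference FVT code; `fvt_init`, the two-dimensional toy embeddings, and the character-level stand-in for the original tokenizer are all hypothetical.

```python
def fvt_init(new_vocab, old_embeddings, old_tokenize):
    """Initialize embeddings for new_vocab from an existing embedding table.

    new_vocab:      iterable of token strings in the adapted vocabulary.
    old_embeddings: dict mapping original tokens to lists of floats.
    old_tokenize:   callable splitting a string into original tokens.
    """
    new_embeddings = {}
    for token in new_vocab:
        if token in old_embeddings:
            # Token survived from the original vocabulary: copy it.
            new_embeddings[token] = list(old_embeddings[token])
        else:
            # New token: average the embeddings of its original pieces.
            pieces = [old_embeddings[p] for p in old_tokenize(token)]
            new_embeddings[token] = [
                sum(column) / len(pieces) for column in zip(*pieces)
            ]
    return new_embeddings

# Toy example (hypothetical): character-level "original tokenizer".
old = {"a": [1.0, 0.0], "b": [0.0, 1.0]}
new = fvt_init(["a", "b", "ab"], old, old_tokenize=list)
print(new["ab"])  # [0.5, 0.5]
```

With tied input and output embeddings, this single table is the only matrix that needs to be rebuilt.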

5. Phase 3 — Continued Pre-training

With the adapted tokenizer in place, we perform continued pre-training on our full bilingual corpus: approximately ten billion words of Georgian and a matched volume of English from FineWeb, maintaining a balanced bilingual mix. This balance is essential: if Georgian dominates, the model's English capabilities degrade; if English dominates, the model fails to internalize Georgian structure.

The model architecture remains unchanged. We use the original pre-trained weights with the modified embedding layer. Training proceeds with standard hyperparameters: cosine learning rate schedule with warmup, AdamW optimizer, and sequence length of 4,096 tokens. Because our adapted tokenizer compresses Georgian text more efficiently, the same ten billion words are encoded into fewer tokens than they would have been with the original tokenizer. Based on comparable experiments with Llama 3.2-1B (Purason et al., 2025), we expect approximately a 26% reduction in total training tokens, translating directly to reduced GPU-hours.
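For reference, a cosine schedule with linear warmup can be written as a small pure function. The sketch below shows one common formulation; the step counts and peak learning rate in the example are placeholder values chosen for illustration, not the settings of our runs.

```python
import math

def lr_at(step, total_steps, warmup_steps, peak_lr, min_lr=0.0):
    """Learning rate at a given step: linear warmup, then cosine decay."""
    if step < warmup_steps:
        # Linear ramp that reaches peak_lr on the last warmup step.
        return peak_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(1.0, progress)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1 + math.cos(math.pi * progress))

# Placeholder values for illustration only.
TOTAL, WARMUP, PEAK = 1_100, 100, 1e-4
for step in (0, 99, 600, 1_100):
    print(step, lr_at(step, TOTAL, WARMUP, PEAK))
```

In this formulation the rate peaks at the end of warmup, passes through half the peak at the midpoint of the decay, and reaches `min_lr` at the final step.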

6. Phase 4 — Translation Fine-tuning

This phase exploits a specific asymmetry in existing LLM capabilities. Models like Gemini translate Georgian to English competently. We generate a large parallel dataset by having Gemini translate Georgian texts into English. These translations are high quality because Georgian-to-English is the direction in which current models are strong.

We then reverse this corpus: the English translations become the source, and the original Georgian texts become the target. We fine-tune our continued pre-trained model on this reversed corpus for English-to-Georgian translation. This bypasses the fundamental bottleneck — we do not need a model that is already good at generating Georgian; we only need one that is good at understanding it, which existing models already are.
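The reversal itself is a simple data transformation. The sketch below assumes the forward translations are available as (Georgian original, English translation) pairs; the record layout with `source`, `target`, and `direction` fields is a hypothetical format, not a fixed schema of our pipeline.

```python
def reverse_pairs(forward_pairs):
    """Turn (georgian_original, english_translation) pairs into
    English-to-Georgian training records, skipping empty sides."""
    return [
        {"source": english, "target": georgian, "direction": "en-ka"}
        for georgian, english in forward_pairs
        if georgian.strip() and english.strip()
    ]

# Illustrative data: the second pair has an empty translation and is dropped.
forward = [
    ("გამარჯობა", "Hello"),
    ("მადლობა", ""),
]
records = reverse_pairs(forward)
print(records)
# [{'source': 'Hello', 'target': 'გამარჯობა', 'direction': 'en-ka'}]
```

The target side of every record is human-written Georgian, so the model is trained to produce authentic text even though the source side is machine-generated.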

7. Phase 5 — Synthetic Data Generation via Constrained Translation

Once we have a competent English-to-Georgian translation system, we use it to scale our Georgian corpus by a factor of five to ten. The source material is high-quality English text — books, articles, technical documents, educational content — which exists in virtually unlimited supply.

The critical distinction from unconstrained synthetic data generation is that translation is bounded by its source. The ideas, structure, argumentation, and factual content all originate from genuine human-written English text. The model's task is limited to rendering that content in Georgian. This constraint means linguistic diversity is bounded by the diversity of the English source, which is vast, rather than by the model's own generative tendencies — avoiding the well-documented phenomenon of model collapse.

The resulting synthetic Georgian corpus of 50 to 100 billion words would represent an unprecedented volume of Georgian-language training data.

8. Phase 6 — Large Model Training

With a corpus of 60 to 110 billion Georgian words — authentic and translated combined — alongside matched English data, we are positioned to train a substantially larger model. The tokenizer adapted in Phase 2 carries forward, meaning the training efficiency gains compound. The synthetic data from Phase 5 provides domain coverage that Georgian has never had at scale: scientific text, technical documentation, legal language, medical terminology.

The specific architecture and parameter count will be determined by quality metrics achieved in preceding phases and available compute budget.

9. Related Work

Our approach draws on two primary lines of recent research.

Trans-tokenization (Remy et al., 2024) demonstrated that cross-lingual vocabulary transfer through SMT-based token alignment can effectively adapt GPT-style LLMs to unseen languages with distinct scripts. Their Tweeties series of models and Hydra LLMs showed competitive performance across low-resource and mid-resource settings, achieving state-of-the-art zero-shot machine translation for Tatar. Their key insight — that evidence-based SMT mappings outperform character-based embedding initialization for autoregressive models — informs our embedding initialization strategy. However, where trans-tokenization focuses on mapping between source and target vocabularies using parallel corpora, our approach uses continued BPE training to create new tokens that are natively compatible with the existing merge sequence, avoiding the need for parallel-corpus-based alignment at the tokenizer level.

Efficient tokenizer adaptation (Purason et al., 2025) introduced continued BPE training for vocabulary extension and leaf-based frequency pruning for vocabulary reduction. Their experiments across 70 languages and four model families demonstrated that continued BPE training yields up to 9.6% higher compression than naive extension while producing zero unreachable tokens, and that leaf-based pruning permits removing up to 62.5% of vocabulary without downstream degradation. We adopt both methods directly.

10. Summary

The Georgian language faces a structural disadvantage across three dimensions: encoding cost, data availability, and generation capability. Our six-phase plan addresses each dimension through a sequence of interventions that independently improve the situation and collectively transform it. Tokenizer adaptation through leaf-based pruning and continued BPE training improves the compression of Georgian text by approximately 69%, which cuts its token count by roughly 41%. Large-scale data acquisition expands the available corpus by a factor of five. A translation pipeline exploiting the existing strength of LLMs in the Georgian-to-English direction creates a competent Georgian generator without requiring one to exist first. And constrained synthetic data generation through translation scales the corpus by another order of magnitude without the quality degradation associated with unconstrained generation.

The end result is a path from two billion words of web-crawled Georgian and a tokenizer that fragments a typical word into roughly six tokens, to over sixty billion words of diverse Georgian text and a tokenizer that processes the language at close to English-level efficiency, all built on the efficient, open-source Qwen 3.5-2B architecture.

References

Gee, L., Zugarini, A., Rigutini, L., & Torroni, P. (2022). Fast vocabulary transfer for language model compression. EMNLP Industry Track.

Purason, T., Chizhov, P., Yamshchikov, I. P., & Fishel, M. (2025). Teaching old tokenizers new words: Efficient tokenizer adaptation for pre-trained models. arXiv:2512.03989.

Qwen Team. (2026). Qwen3.5: Towards native multimodal agents. https://qwen.ai/blog?id=qwen3.5

Remy, F., Delobelle, P., Avetisyan, H., Khabibullina, A., de Lhoneux, M., & Demeester, T. (2024). Trans-tokenization and cross-lingual vocabulary transfers: Language adaptation of LLMs for low-resource NLP. COLM 2024.